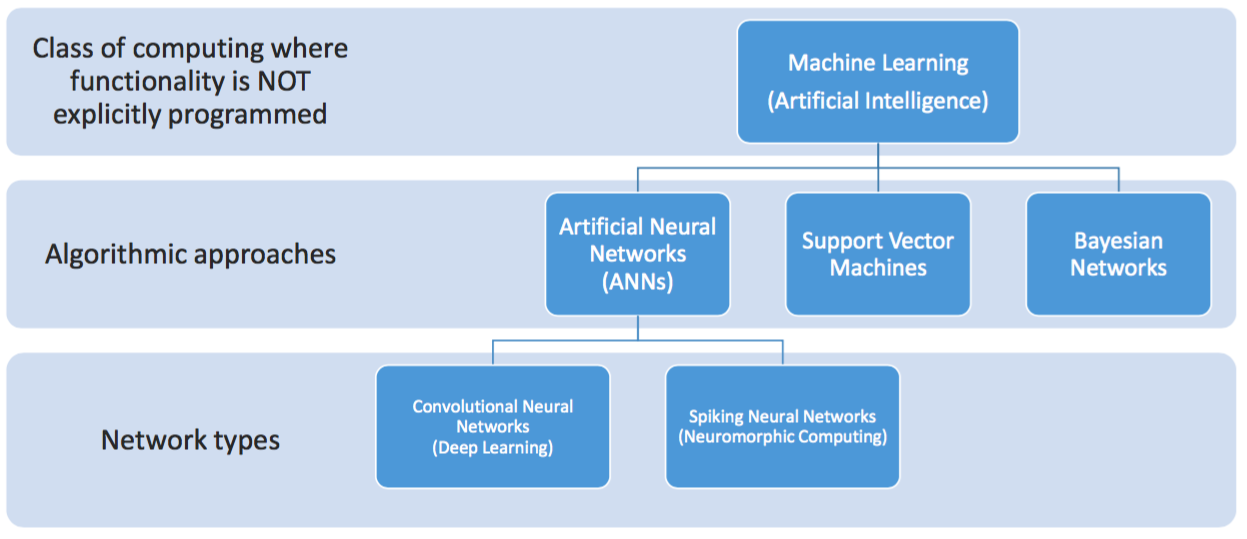

Convolutional neural networks (CNNs) may be the hot artificial intelligence (AI) technology of the moment, in no small part because both their training and inference functions can be accelerated by existing GPUs, FPGAs and DSPs, but they're not the only game in town. Witness, for example, Australia-based startup BrainChip Holdings and its alternative proprietary spiking neural network (SNN) technology (Figure 1). Now armed with both foundation software and acceleration hardware, BrainChip is striving to translate its claims of training-speed and accuracy superiority in certain applications into market success.

Figure 1. Convolutional neural networks are today's dominant, but not the sole, approach to machine learning.

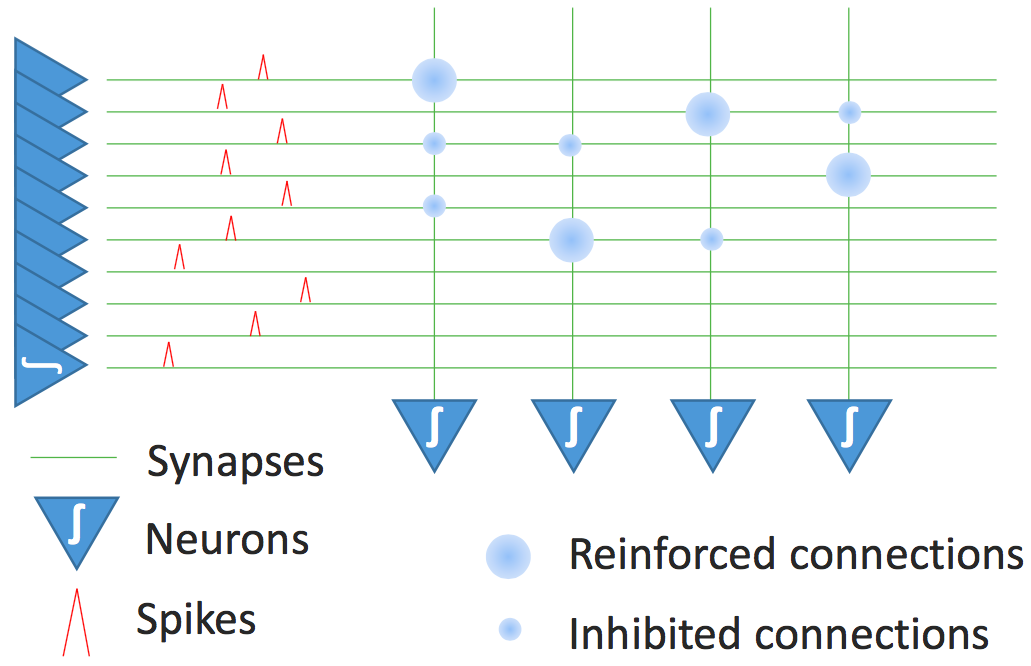

SNNs incorporate not only the intensity but also the timing and duration of a recognition pulse into their neural network modeling approach. In striving to more accurately model the memory storage and recall functions of neural synapses in the biological brain, they're one example of a class of machine learning techniques commonly referred to as neuromorphic computing, which also includes research projects such as IBM's TrueNorth, Stanford's Brainstorm and the Human Brain Project. As described by Bob Beachler, BrainChip's Senior Vice President of Marketing and Business Development, in a recent series of briefings, his company's SNN technology, like the human brain, doesn't "think" using math functions; instead, it leverages spike trains and threshold logic (Figure 2).

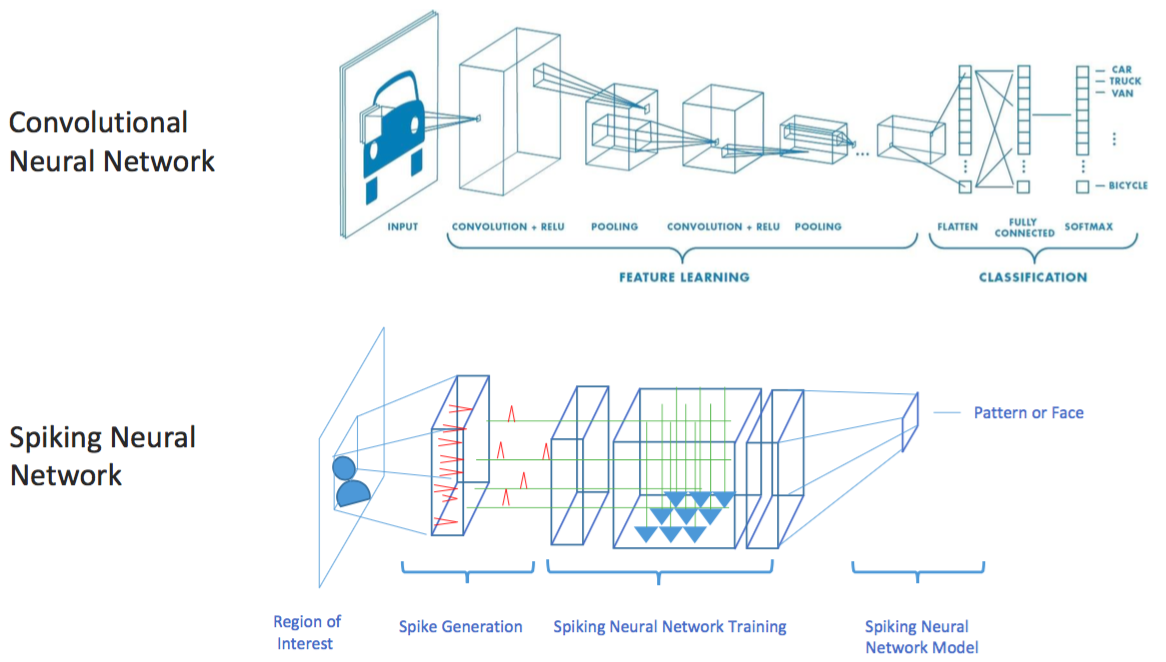

Figure 2. Spiking neural networks strive to emulate the human brain's function, with software and silicon equivalents to variable-intensity and varying-time spiking inputs, synapses, reinforced and inhibited interconnects, and variable-threshold neurons (top). While they excel, according to BrainChip, at identifying objects after initial one-shot training with low-resolution and noisy source images, a CNN or alternative deep learning approach may be preferable for fine-resolution pattern matching and/or large data set training situations (bottom).

Initial model training is based on the reinforcement and inhibition of synaptic connections and neuron thresholds, an approach that is inherently feed-forward in nature (versus the back-propagation intrinsic to CNNs) and can involve both intensity and time dependencies. SNNs, according to Beachler, excel at finding patterns in noisy environments, require only a single low-resolution image for "one-shot" training (although feeding the model with multiple versions of the image at different resolutions and orientations will further improve the accuracy results), and have low computational requirements. Conversely, he admits, CNNs excel (at the tradeoffs of lengthy initial training and comparatively high computation needs for both training and subsequent inference) in situations where large numbers and types of objects need to be recognized, with one extreme example being the 150,000 image/1,000 category data set that formed the foundation of the 2017 ImageNet Large Scale Visual Recognition Challenge.
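The spike-and-threshold behavior and feed-forward training described above can be illustrated with a toy model. The following is a minimal sketch of a generic leaky integrate-and-fire neuron, not BrainChip's proprietary algorithm, whose details are not public in this article. The class name `ThresholdNeuron`, its parameters (`threshold`, `leak`, `rate`) and the update rule are all assumptions chosen for illustration. Note that this software emulation uses ordinary arithmetic, whereas BrainChip describes its hardware as relying on threshold logic.

```python
from dataclasses import dataclass


@dataclass
class ThresholdNeuron:
    """Toy leaky integrate-and-fire neuron (hypothetical, for illustration only)."""
    weights: list[float]      # one synaptic weight per input line
    threshold: float = 1.0    # the neuron fires when its potential reaches this value
    leak: float = 0.5         # fraction of the potential retained at each time step
    potential: float = 0.0    # accumulated potential from recent input spikes

    def step(self, spikes: list[int]) -> bool:
        """Advance one time step; spikes[i] is 1 if input i spiked, else 0."""
        self.potential = self.potential * self.leak + sum(
            w for w, s in zip(self.weights, spikes) if s
        )
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False

    def reinforce(self, spikes: list[int], rate: float = 0.25) -> None:
        """Feed-forward update: reinforce synapses whose inputs spiked, inhibit the rest."""
        self.weights = [
            min(1.0, w + rate) if s else max(0.0, w - rate)
            for w, s in zip(self.weights, spikes)
        ]


# One-shot "training" on a single pattern (assumed example values).
neuron = ThresholdNeuron(weights=[0.3, 0.3, 0.3, 0.3])
pattern = [1, 1, 0, 0]
print(neuron.step(pattern))   # False: 0.6 is below the threshold of 1.0
neuron.potential = 0.0
neuron.reinforce(pattern)     # active synapses strengthened, inactive ones weakened
print(neuron.step(pattern))   # True: 1.1 now reaches the threshold
```

Because the potential leaks away between steps, the same two input spikes can trigger the neuron when they arrive back to back but fail to do so when separated by a gap, which is one simple way that spike timing, and not only intensity, can influence the result.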

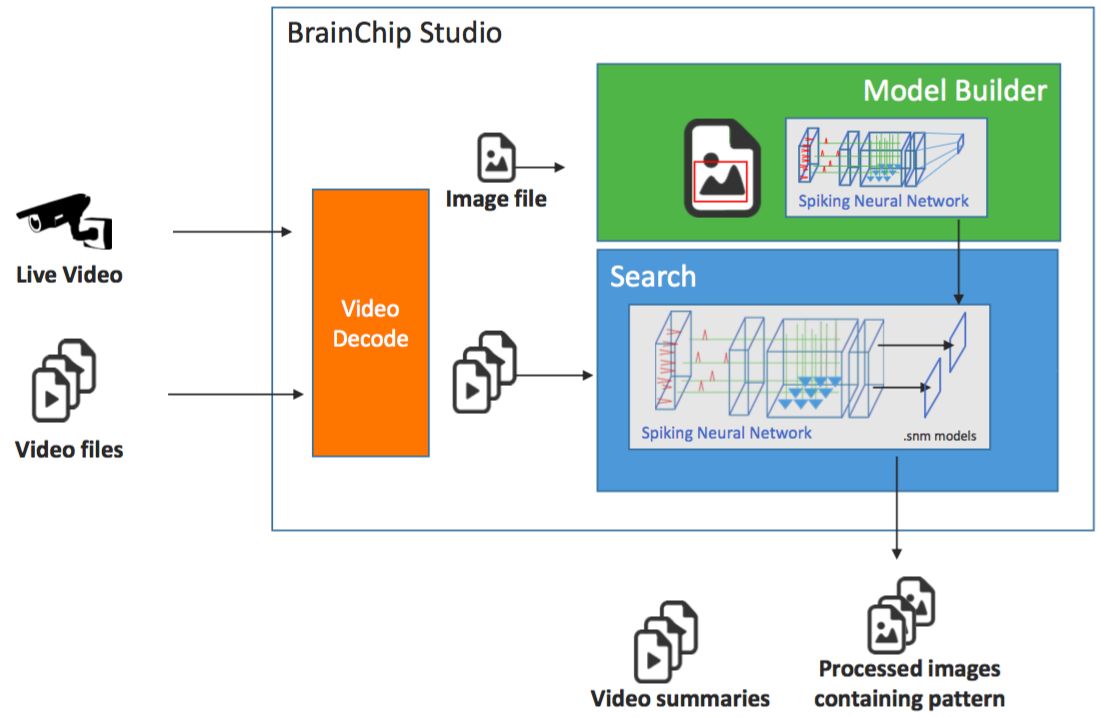

BrainChip's initial target applications involve video surveillance in law enforcement, military and commercial (retail, casino, etc.) markets, which according to Beachler offer the best chance of success for his company given its current modest headcount. BrainChip's foundation software product intended for customer deployments, BrainChip Studio, was released in mid-July after a lengthy beta and application-tailoring process. Targeted at both forensic object/pattern search and face detection and classification usage scenarios, it runs on both Windows and Linux operating systems and is fully CPU-based (although it leverages the video processing cores in modern AMD and Intel x86 CPUs for decode, scaling, rotation and other offload tasks). Common to both usage scenarios are the facts that SNNs fundamentally recognize shapes and patterns, and that important objects (from pre-training) get higher reinforcement; analogously, thanks to evolutionary adaptation, the human visual system is particularly adept at detecting s-shaped objects such as snakes. Conversely, when analysis of fine-resolution detail is required, such as with emotion discernment and other facial biometrics, a CNN or other technique may be preferable.

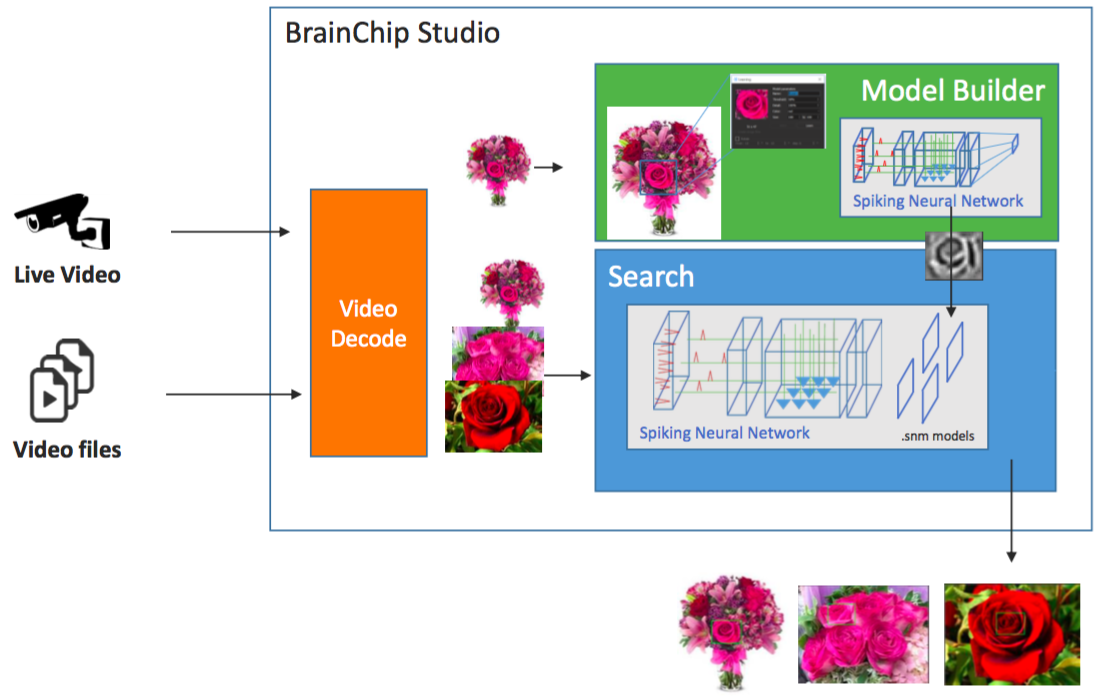

Figure 3 shows one example object/pattern recognition usage scenario. The SNN is initially trained by means of a reference image (ideally provided at various scale sizes and rotations), which generates the spike pattern map that builds the model. Subsequent video (live and/or pre-recorded) fed to the model generates both video summaries and processed still frame images containing candidate object/pattern matches. Adjustable thresholds in the software affect the match probability and therefore the number of candidate options presented.
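The effect of an adjustable match threshold on the number of candidates can be sketched in a few lines. This is a hypothetical illustration, not BrainChip Studio's API: the function `select_candidates`, the frame identifiers and the scores are all invented for the example.

```python
def select_candidates(scores: dict[str, float], threshold: float) -> list[str]:
    """Return the frames whose match score meets the threshold, best match first."""
    hits = [(score, frame) for frame, score in scores.items() if score >= threshold]
    return [frame for score, frame in sorted(hits, reverse=True)]


# Made-up match scores for three still frames (illustrative values only).
scores = {"frame_0012": 0.91, "frame_0047": 0.64, "frame_0103": 0.78}

print(select_candidates(scores, 0.75))  # ['frame_0012', 'frame_0103']
print(select_candidates(scores, 0.60))  # ['frame_0012', 'frame_0103', 'frame_0047']
```

Lowering the threshold admits weaker matches, so the operator sees more candidates at the cost of more false positives; raising it does the opposite.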

Figure 3. After initially being fed with a one-shot training image, ideally at multiple scales and orientations, BrainChip Studio can subsequently identify potential match candidates (top). One example scenario involves finding matches for a rose (bottom).

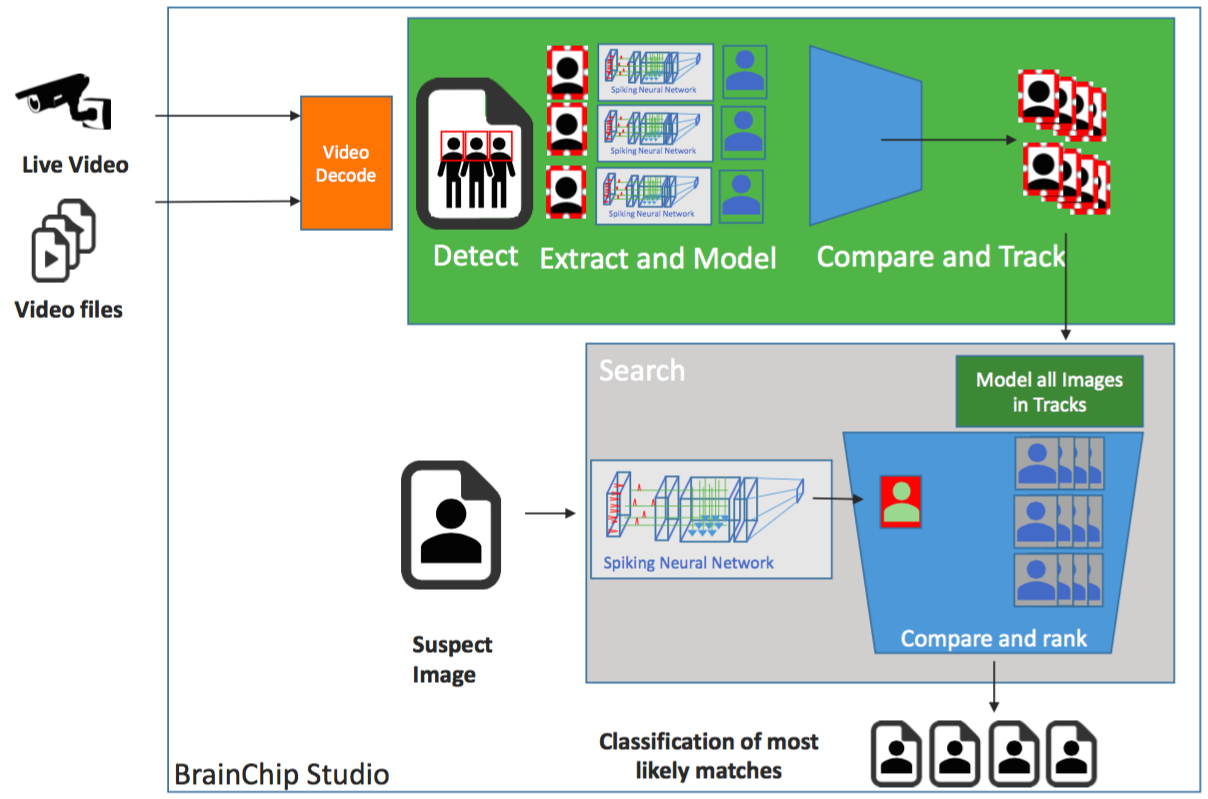

BrainChip has also added specific features to Studio to aid in facial detection and classification, targeting unique identification as well as enabling multiple face detections per frame and the classification of faces into similar groups. In the usage scenario shown in Figure 4, these features are employed to extract candidate faces from multiple surveillance video feeds. These candidates are then compared against the image of a suspect that's being investigated, and Studio identifies the most likely candidates in the surveillance footage.

Figure 4. Additional features added to Studio specifically for face detection and recognition purposes can, for example, identify a suspect in a series of surveillance camera recordings.

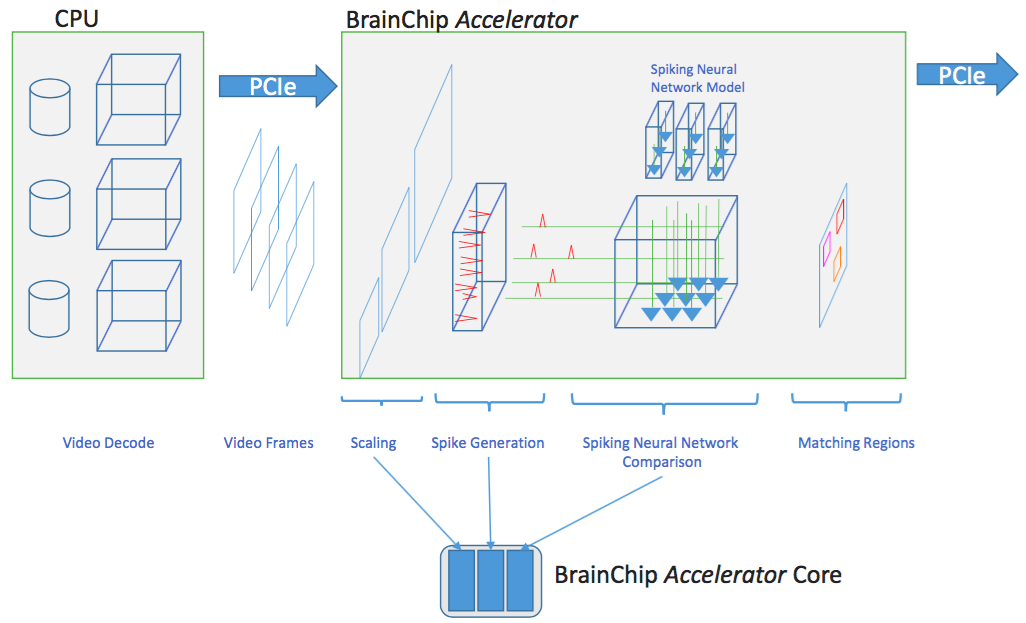

For scenarios where large numbers of video channels need to be processed or where faster match results are needed, BrainChip more recently unveiled its Accelerator, an 8-lane PCI Express Gen3 half-height, low-profile add-in card that works in conjunction with Studio (Figure 5). Accelerator can simultaneously process up to 16 channels of video, with a peak total throughput of over 600 images per second. Each SNN acceleration core consumes less than 1 W of power; six parallel processing cores fit within the Xilinx Kintex KU115 FPGA on the board, whose total power consumption of sub-15 W requires only passive cooling. The bulk of the DSP slices on the FPGA are unused, aside from a subset of these resources employed for video scaling. Unlike CNNs, SNNs don't leverage multiply-accumulate-centric matrix multiplication and linear algebra functions; instead, SNNs predominantly harness the FPGA's interconnect fabric as silicon synapses, with look-up tables used to emulate synapse connections and neurons (Figure 2).
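The quoted Accelerator figures imply some rough per-channel and per-core numbers. The short calculation below is a back-of-the-envelope sketch that assumes the peak throughput is spread evenly across channels and cores, something the article does not state; the published figures are bounds ("over 600", "less than 1 W", "sub-15 W"), so the results are bounds as well.

```python
CHANNELS = 16             # simultaneous video channels
PEAK_IMAGES_PER_S = 600   # quoted as "over 600", so a lower bound
CORES = 6                 # SNN cores in the FPGA
CORE_POWER_W = 1.0        # quoted as "less than 1 W", so an upper bound
BOARD_POWER_W = 15.0      # quoted as "sub-15 W", so an upper bound

# Assumption: load is evenly distributed across channels and cores.
per_channel = PEAK_IMAGES_PER_S / CHANNELS   # images/s per video channel
per_core = PEAK_IMAGES_PER_S / CORES         # images/s per SNN core
cores_power = CORES * CORE_POWER_W           # W consumed by all six cores together

print(f"{per_channel} images/s per channel")   # 37.5
print(f"{per_core} images/s per core")         # 100.0
print(f"{cores_power} W of the {BOARD_POWER_W} W board budget")  # 6.0 of 15.0
```

Under these assumptions each channel receives at least 37.5 images per second with all 16 channels active, and the six SNN cores account for less than 6 W of the board's power consumption.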

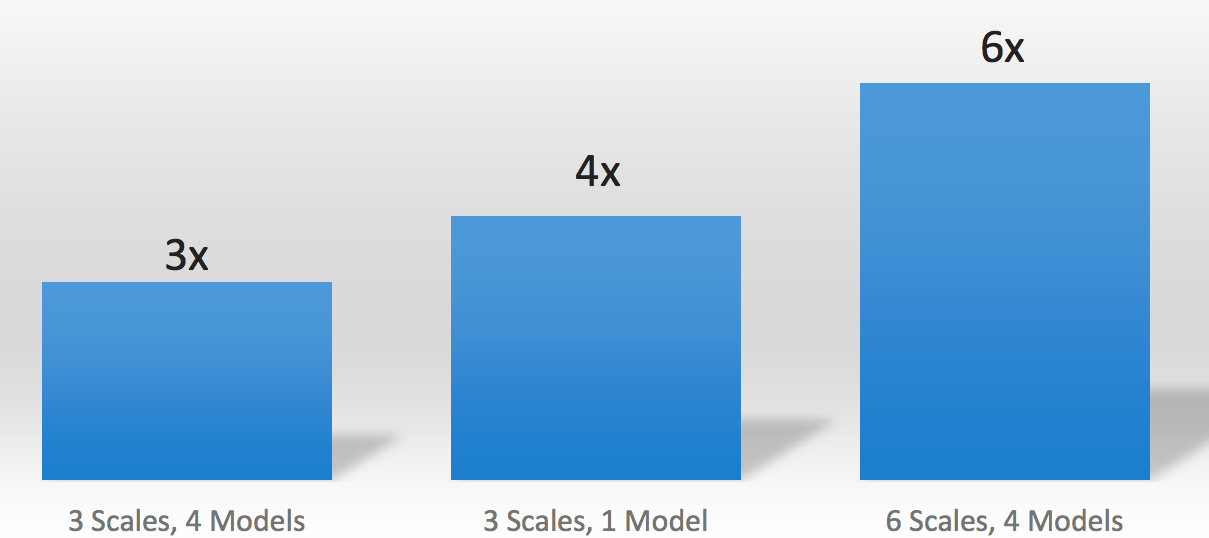

Figure 5. BrainChip's Accelerator card, based on a six-core SNN processor implementation in a Xilinx FPGA (top), offloads SNN functions from the host CPU (middle), significantly boosting throughput (bottom). The reference for comparison is an Intel Core i7 CPU containing 4 physical cores, along with HyperThreading virtual core support, and running at 2.5 GHz.

BrainChip Studio is now available at a cost of $4,000 per video channel. The BrainChip Accelerator add-in card, scheduled for availability beginning at the end of this month, costs $10,000 and will initially be sold to system integrators; in the future, BrainChip may also offer integrated card-plus-software-plus-server bundles directly to end users. And what about licensing the SNN acceleration core found inside the Xilinx FPGA? When asked this question, Beachler initially reminded InsideDSP of his company's current small size, and the consequent need to focus on highest "bang for the buck" near-term opportunities. With that said, he acknowledged that he'd be foolish to reject potential licensee interest outright; the company's initial business plan, in fact, was to be an IP licensing entity.
