Want computer vision in your product? Wonder how to use deep learning? Engage the experts at BDTI.

Advances in technology are making science fiction a reality—today. With the incredible power and low cost of new processors, programmable logic, and digital video cameras, it is now possible to add computer vision to nearly any product—scientific, medical, industrial, and consumer. Whether it’s a toy that can “see” and recognize objects, a video camera that can count the number and type of people in a scene, or an industrial system that visually inspects products for defects, computer vision can enable unheard-of new functionality in your product, letting you tackle new markets and seize new opportunities.

But like most new technologies, computer vision and deep learning demand a special skill set. At the heart of computer vision is signal processing: at the pixel level, it extracts objects from raw pixels; at the object level, it tracks, evaluates, and “understands” the behavior of those objects. For the specialized experience and expertise needed for computer vision, you can rely on BDTI.

Got a question? Ask the experts. Want to see our track record? See examples of projects we've done for customers.

How BDTI helps companies with computer vision and deep learning

Computer vision processor selection

- Broad knowledge of current processing platform options—to quickly identify viable candidates

- Hands-on experience with dozens of processors—chips, cores, programmable logic, and others—to provide insight into their true strengths and weaknesses

- Understanding of vendor strategies—to anticipate which processors are likely to remain viable over the long term

Computer vision algorithm design

- Drawing on knowledge of digital signal processing and computer vision fundamentals to design and implement an algorithm that meets your requirements

- Ensuring the algorithm can be implemented efficiently on your processor

Computer vision software implementation and optimization

- Designing a software architecture and data flow to take maximum advantage of processor resources

- Selecting appropriate data types to avoid wasting precious resources

- Modifying, substituting, or combining algorithms

- Mapping the chosen algorithms into processor instructions cleanly and efficiently, using the most appropriate languages and tool capabilities

Design and implementation of deep learning

Deep learning techniques, typically implemented with neural network architectures such as convolutional neural networks (CNNs) and other deep neural networks (DNNs), are rapidly becoming a key technology for computer vision.

Need to understand how deep learning could be implemented through a neural network in your application? BDTI can help. BDTI has years of experience in creating and optimizing algorithms to run efficiently on a wide variety of processing platforms.

Where do you need help? Let us know.

Our team and track record

BDTI’s team of engineers has years of experience building complex, reliable, and low-cost signal processing systems, including computer vision applications. We will work with you to understand your application, identify your requirements, select the best technology (hardware and software), and then design and build your system. We can work closely with your existing engineering team, handling only the computer vision portion of the design, or we can execute a turnkey project and deliver a complete, finished system. We can use existing IP or develop custom algorithms for your application.

Examples of recent projects include:

Implementation and optimization of vision algorithms for Tango, Google's 3D sensing technology. BDTI obtained reference algorithms and heavily refactored them to support efficient implementation on the target hardware, then worked closely with the algorithm designer to understand and implement various speed, power, and accuracy tradeoffs. Next, BDTI implemented and optimized the software on the SoC's DSP and CPU, and supported integration and validation. BDTI’s unique combination of expertise in computer vision algorithms and understanding of processor architectures enabled Lenovo to ship the first smartphone with Tango 3D vision technology on time.

Design of a vision-based detection and tracking system. BDTI performed a detailed analysis of the client's algorithms to assess performance requirements, then leveraged relationships with vendors to identify a platform that met size, power consumption, and performance requirements. Next, BDTI proposed algorithm changes and created highly optimized software utilizing an on-chip co-processor. BDTI's knowledge of processors, computer vision algorithms, and optimization techniques enabled the customer to win a government contract.

Design and implementation of a unique motion analysis algorithm for efficient execution on the Hexagon DSP embedded in the Qualcomm Snapdragon mobile processor. BDTI created a unique variant of optical flow that combines high accuracy with lower processing demands, then implemented and optimized the algorithm for the Hexagon DSP using HVX (Hexagon Vector eXtensions). BDTI's expertise in algorithm design and understanding of processor architectures enabled the customer to demonstrate that its low-power Hexagon DSP supports demanding applications. (In the image at right, the color and intensity indicate direction and speed of motion.)

Design and implementation of a neural network pedestrian detection application for an embedded processor. BDTI engineers surveyed the published literature to select a suitable CNN architecture as a starting point, then, with the target hardware in mind, experimented with network designs to reduce computational demand while maintaining acceptable accuracy. Next, BDTI iterated on the network design, retraining each variant, to deliver a final network design and trained weights that met the customer's needs. BDTI's knowledge of cutting-edge algorithms enabled creation of a CNN-based pedestrian detection application that exceeded customer expectations.

Our track record also includes:

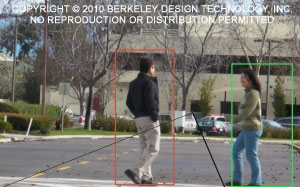

Prototyping of computer-vision-based pedestrian detection and road sign recognition algorithms for automotive applications. Built for a major semiconductor company using a hybrid ARM/FPGA processing platform. (In the image at right, the software identifies a pedestrian in the “danger zone” and marks him with a red box. A pedestrian outside the “danger zone” is indicated by a green box.)

- A mathematical model for a vision-based feedback control system, developed for a scientific instrumentation company. BDTI's work shaved weeks off the company’s development time and enabled the company to improve the throughput of its equipment.

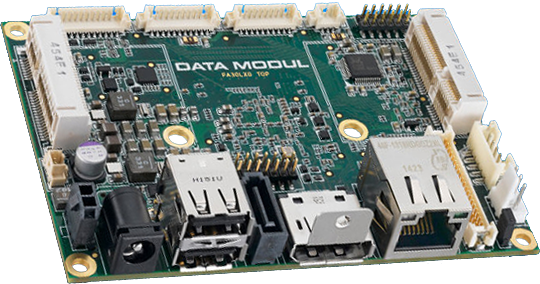

A complete demonstration system for a new processor targeting industrial machine vision applications, built for a global processor vendor. The demo shows the usefulness of a new, unique processor architecture that incorporates a configurable vision processing pipeline. (Read about the demo.)

- Highly efficient image processing algorithms that applied sophisticated visual effects to digital photographs using an ultra-low-cost embedded processor. This functionality enabled the customer to build a toy smart camera.

- A complex, demanding optical flow algorithm, used to detect apparent motion in an HD video stream. Designed and implemented for testing high-level synthesis tools.

See videos of BDTI demonstrating vision applications:

At the 2013 Embedded Vision Summit, BDTI showed two vision applications: a “dice counting” application and a color-based object detection application. The “dice counting” application uses edge detection and other computer vision techniques to count the dots on dice faster than a human can. It was designed, implemented, and optimized on an Analog Devices ADSP-BF609, using the processor's Pipeline Vision Processor, which provides hardware support for high-definition video analytics. The color-based object detection algorithm, designed by BDTI, was implemented and optimized to run on the Hexagon DSP embedded in the Qualcomm Snapdragon mobile processor.

At the 2015 Embedded Vision Summit, BDTI showed two vision applications: one uses background subtraction techniques, and the other uses motion-based object detection combined with augmented reality effects. The background subtraction algorithm was implemented and optimized to run on the Adreno GPU embedded in the Qualcomm Snapdragon mobile processor, demonstrating the capabilities of the GPU for computer vision tasks. The motion-based object detection algorithm was implemented in OpenCV on an x86-based processor, then optimized and accelerated using OpenCL.

At the March 2016 Embedded Vision Alliance Member Meeting, BDTI showed a dense optical flow algorithm running on the Qualcomm Snapdragon mobile processor. This computationally demanding algorithm was implemented and optimized to run at 20 frames per second on the Hexagon DSP in the Qualcomm Snapdragon 820 using the Hexagon Vector eXtensions (HVX).

- At the 2017 Embedded Vision Summit, BDTI showed three demos.

First, BDTI demonstrated its ability to create efficient implementations of computer vision algorithms on low-cost processors by showing a face detection application running on a low-cost Renesas RZ/G family processor. BDTI implemented the face detection algorithm to run in real time with minimal use of processor resources, demonstrating that the right skills can enable computer vision even on low-cost microprocessors, bringing sophisticated functionality to more products.

As its second demo, BDTI demonstrated how it created an implementation of Tango, Google’s 3D sensing technology, running on the Lenovo Phab 2 Pro smartphone. This successful product was the result of BDTI’s unique expertise: understanding of complex compute-intensive computer vision algorithms and how to map them efficiently onto specialized processor architectures. To obtain real-time performance, BDTI engineers carefully re-architected Tango algorithms to take advantage of the heterogeneous processing resources on the Qualcomm Snapdragon 652 processor used in the Lenovo smartphone.

For its third demo, BDTI showed an example of the company's machine learning engineering services. The demo highlights BDTI’s ability to design, train, and implement neural networks for specific use cases. For this demo, BDTI engineers implemented YOLO (You Only Look Once), an approach in which classification and detection are performed by a single neural network in a single pass, resulting in very fast detection. BDTI integrated YOLO with OpenCV functions to enable real-time display of detections.

At the 2018 Consumer Electronics Show in Las Vegas, Pulin Desai, Product Marketing Director at Cadence, demonstrated an extremely lightweight implementation of a YOLO-based neural network trained for people detection, which BDTI designed and optimized for Cadence’s Tensilica Vision P6 DSP. BDTI's implementation was optimized for on-device AI applications, with reduced memory footprint and bandwidth requirements.

- BDTI demoed three applications at the 2018 Embedded Vision Summit.

The first demo showcases BDTI's expertise in the design and development of vision-based applications. BDTI engineers implemented a version of the MobileNet-SSD neural network to detect people in the video stream, then used the Intel RealSense SDK to calculate object size and distance from the data provided by the RealSense D415 stereo camera. This application was created as part of an evaluation of the RealSense SDK. BDTI's report on the RealSense SDK can be downloaded. (Spoiler: BDTI found the SDK straightforward and easy to use.)

The second demo shows the real-time performance of Google's 3D sensing algorithms on the Lenovo Phab 2 Pro smartphone. The first commercial product with Google’s 3D sensing technology, the Phab 2 Pro is the result of BDTI’s unique ability to understand complex compute-intensive computer vision algorithms and map them efficiently onto specialized processor architectures. To obtain real-time performance, BDTI engineers carefully re-architected the algorithms to take advantage of the heterogeneous processing resources on the Qualcomm Snapdragon 652 processor used in the Lenovo phone.

The third demo shows an extremely lightweight implementation of a neural network trained for people detection. Starting with the open-source YOLO (You Only Look Once) network, BDTI pruned layers and performed 8-bit quantization, creating an optimized implementation with reduced memory footprint and bandwidth requirements for on-device AI applications. The implementation is shown running on the Cadence Tensilica Vision P6 DSP.

BDTI can design a computer vision-enabled product or add vision capabilities to your products faster and with less risk than you may have thought possible. And that translates to faster time to market and increased revenue! Contact us today to discuss how computer vision can enhance your products.

For a confidential consultation, call us at +1 925 954 1411 or contact us via the web.