Xilinx, like many companies, sees a significant opportunity in burgeoning deep neural network applications, as well as in those that leverage computer vision (and, often, in applications that do both). Last fall, targeting acceleration of cloud-based deep neural network inference (in which a neural network applies what it learned during training to new data it is presented with), the company unveiled its Reconfigurable Acceleration Stack, an application-tailored expansion of its original SDAccel development toolkit. Similarly, Xilinx has now expanded its SDSoC toolset, originally unveiled in March 2015, to focus on machine intelligence "at the edge" (i.e., in standalone and network-client products) via the new reVISION stack, which is tailored to both "classic" computer vision algorithm acceleration and emerging deep learning inference processing.

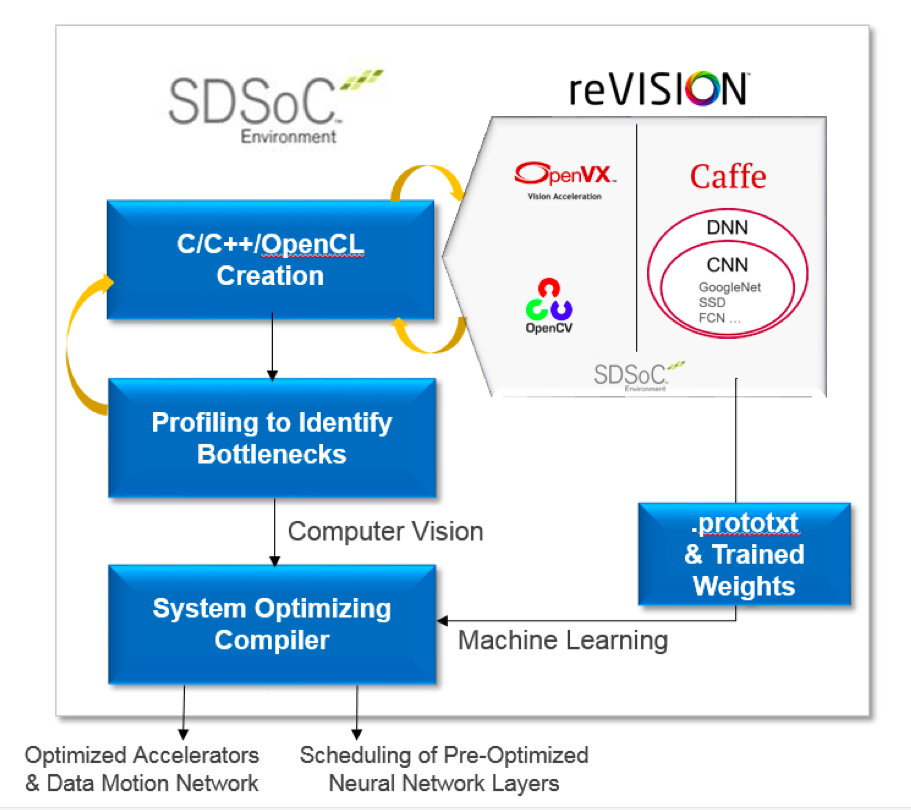

Computer vision algorithm acceleration begins with SDSoC, an Eclipse-based C, C++ and (newly added) OpenCL environment intended to let software engineers create FPGA co-processors using a familiar programming model. SDSoC supports an iterative design flow in which the developer selectively migrates portions of the application code to the FPGA, whether a standalone chip or the programmable logic portion of a Zynq single- or multi-core ARM-based SoC. By profiling code in SDSoC, developers can identify bottlenecks and mark the functions they want to accelerate or otherwise optimize in hardware. SDSoC's optimizing compiler then creates an accelerated implementation, including both the processor/accelerator interface and the software drivers.
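To illustrate the profiling step in general terms, the sketch below times two stages of a hypothetical grayscale image pipeline in plain C++ with `std::chrono` and reports the slower stage as the candidate to mark for acceleration. The names `box_filter3x3`, `threshold`, `profile_pipeline` and `hottest_stage` are illustrative assumptions, not SDSoC APIs; SDSoC's own profiler and function-marking mechanism are not shown.

```cpp
#include <algorithm>
#include <cassert>
#include <chrono>
#include <cstddef>
#include <cstdint>
#include <map>
#include <string>
#include <vector>

using Image = std::vector<std::uint8_t>;  // row-major grayscale pixels

// Hypothetical hot spot: 3x3 box filter. Border pixels are copied unchanged
// to keep the sketch short.
Image box_filter3x3(const Image& in, int width, int height) {
    assert(static_cast<int>(in.size()) == width * height);
    Image out(in);
    for (int y = 1; y < height - 1; ++y) {
        for (int x = 1; x < width - 1; ++x) {
            int sum = 0;
            for (int dy = -1; dy <= 1; ++dy)
                for (int dx = -1; dx <= 1; ++dx)
                    sum += in[(y + dy) * width + (x + dx)];
            out[y * width + x] = static_cast<std::uint8_t>(sum / 9);
        }
    }
    return out;
}

// Hypothetical cheap stage: binary threshold.
Image threshold(const Image& in, std::uint8_t t) {
    Image out(in.size());
    std::transform(in.begin(), in.end(), out.begin(), [t](std::uint8_t p) {
        return static_cast<std::uint8_t>(p > t ? 255 : 0);
    });
    return out;
}

// Wall-clock time of one call, in microseconds.
template <typename F>
double time_us(F&& f) {
    auto start = std::chrono::steady_clock::now();
    f();
    auto stop = std::chrono::steady_clock::now();
    return std::chrono::duration<double, std::micro>(stop - start).count();
}

// Times each stage of the hypothetical pipeline on a synthetic frame.
std::map<std::string, double> profile_pipeline(int width, int height) {
    Image img(static_cast<std::size_t>(width) * height, 128);
    Image blurred, binary;  // outputs are stored so the work is not discarded
    std::map<std::string, double> t;
    t["box_filter3x3"] = time_us([&] { blurred = box_filter3x3(img, width, height); });
    t["threshold"] = time_us([&] { binary = threshold(blurred, 100); });
    return t;
}

// Name of the stage with the largest measured time ("" if none).
std::string hottest_stage(const std::map<std::string, double>& timings_us) {
    auto it = std::max_element(timings_us.begin(), timings_us.end(),
        [](const auto& a, const auto& b) { return a.second < b.second; });
    return it == timings_us.end() ? std::string() : it->first;
}
```

Calling `hottest_stage(profile_pipeline(640, 480))` names the stage with the largest share of runtime, which is the natural first candidate to move into programmable logic. Single-run timings are noisy, so a real profiling pass would repeat and average them.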

After obtaining comparative latency, throughput, power consumption, and resource utilization estimates for various design variants, a developer can settle on the one that best balances software running on the ARM cores against accelerator hardware for the design's specific requirements. The reVISION stack augments SDSoC with several dozen acceleration-ready OpenCV functions for computer vision processing; OpenVX framework support is scheduled for later this year, and the OpenCV acceleration library will continue to expand.
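The variant-selection step amounts to filtering design points against hard requirements and then optimizing one remaining metric. The sketch below assumes hypothetical `Variant` and `Requirements` structs holding the four kinds of estimates mentioned above and picks the lowest-power variant that meets every bound; it is an illustration of the trade-off, not how SDSoC reports or compares its estimates.

```cpp
#include <cassert>
#include <optional>
#include <string>
#include <vector>

// Hypothetical per-variant estimates of the kind a design tool might report.
struct Variant {
    std::string name;
    double latency_ms;
    double throughput_fps;
    double power_w;
    double logic_utilization;  // fraction of programmable logic used, 0.0 to 1.0
};

// Hypothetical hard limits for the target design.
struct Requirements {
    double max_latency_ms;
    double min_throughput_fps;
    double max_power_w;
    double max_logic_utilization;
};

bool meets(const Variant& v, const Requirements& r) {
    return v.latency_ms <= r.max_latency_ms &&
           v.throughput_fps >= r.min_throughput_fps &&
           v.power_w <= r.max_power_w &&
           v.logic_utilization <= r.max_logic_utilization;
}

// Among variants that satisfy every requirement, pick the lowest-power one;
// ties go to lower logic utilization. Returns std::nullopt if none qualify.
std::optional<Variant> choose_variant(const std::vector<Variant>& variants,
                                      const Requirements& r) {
    std::optional<Variant> best;
    for (const auto& v : variants) {
        if (!meets(v, r)) continue;
        if (!best || v.power_w < best->power_w ||
            (v.power_w == best->power_w &&
             v.logic_utilization < best->logic_utilization)) {
            best = v;
        }
    }
    return best;
}
```

With hypothetical numbers and a latency bound of 16.7 ms (roughly one frame at 60 fps), a software-only variant taking 40 ms is rejected outright and the lower-power of the remaining hardware variants wins. Changing the comparison in `choose_variant` lets the same loop favor throughput or logic utilization instead.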

The reVISION stack's acceleration support for deep learning inference is currently focused on the Caffe framework, with TensorFlow support planned for the future. It supports neural networks such as AlexNet, GoogLeNet, SqueezeNet, SSD, and FCN. The reVISION stack also provides an extensive set of deep learning library elements, including pre-defined and optimized CNN (convolutional neural network) layer implementations that can be used to build custom neural networks. As Vinod Kathail, distinguished engineer and embedded vision team leader at Xilinx, explained in a recent briefing, a developer first uses the Caffe framework to train a neural network; the Caffe-generated .prototxt file then configures the reVISION stack's ARM-based software scheduler, which controls the FPGA-optimized inference accelerators.
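To make the .prototxt-to-scheduler hand-off more concrete, the sketch below reads layer names and types from a simplified Caffe .prototxt and tags each layer as FPGA-accelerated or left on the ARM cores. The `ScheduledLayer` struct, the `kAcceleratedTypes` set and the parser itself are hypothetical; the actual reVISION scheduler's inputs and logic are not described here, and this parser only handles one `name:` or `type:` field per line.

```cpp
#include <cassert>
#include <regex>
#include <set>
#include <sstream>
#include <string>
#include <vector>

struct ScheduledLayer {
    std::string name;
    std::string type;
    bool on_fpga;  // true if the layer type is assumed to have an accelerator
};

// Hypothetical: layer types assumed to have FPGA accelerator implementations.
const std::set<std::string> kAcceleratedTypes = {
    "Convolution", "Pooling", "ReLU", "InnerProduct"};

// Builds an ordered execution plan from a simplified .prototxt text.
std::vector<ScheduledLayer> schedule_from_prototxt(const std::string& text) {
    static const std::regex field(R"re(^\s*(name|type)\s*:\s*"([^"]*)")re");
    std::vector<ScheduledLayer> plan;
    std::istringstream in(text);
    std::string line, name, type;
    int depth = 0;  // current brace nesting level
    while (std::getline(in, line)) {
        std::smatch m;
        // Only fields directly inside a top-level block (depth 1) describe a
        // layer; deeper fields, such as a weight_filler's type, are ignored.
        if (depth == 1 && std::regex_search(line, m, field)) {
            if (m[1].str() == "name") name = m[2].str();
            else type = m[2].str();
        }
        for (char c : line) {
            if (c == '{') {
                ++depth;
            } else if (c == '}' && --depth == 0) {
                // A top-level block just closed: record it if it had a type.
                if (!type.empty())
                    plan.push_back({name, type, kAcceleratedTypes.count(type) > 0});
                name.clear();
                type.clear();
            }
        }
    }
    return plan;
}
```

Tracking brace depth, rather than matching `type:` anywhere in the file, keeps nested parameter blocks from being mistaken for layers, and the network-level `name:` at depth 0 is skipped for the same reason.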

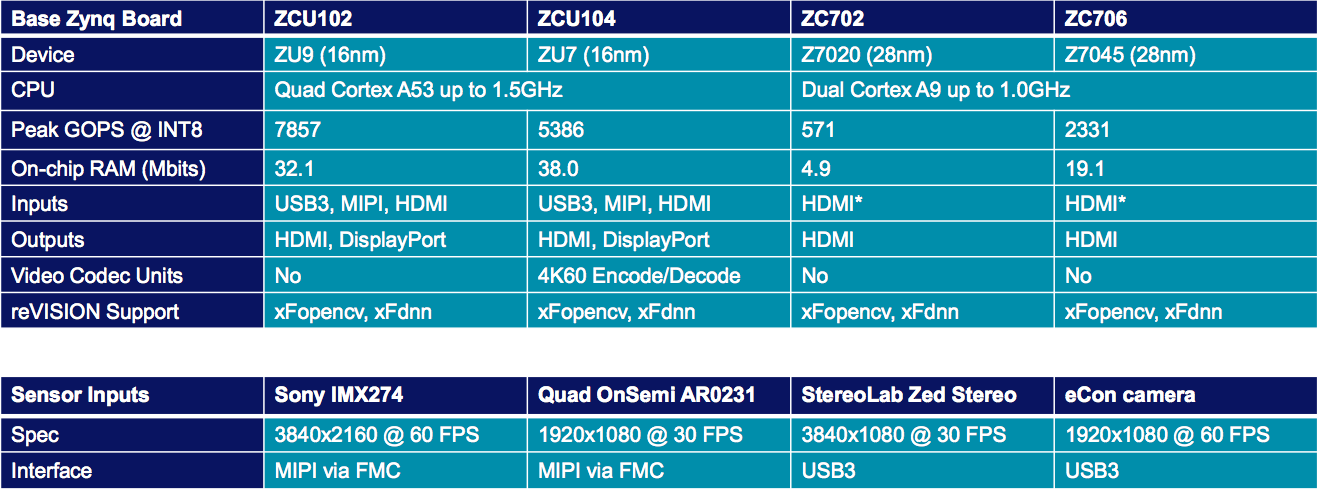

The reVISION stack's development process concludes with a system-optimizing compiler, which finalizes both the FPGA configuration and the CPU software image. The same compiler can also create an optimized "fused" implementation that merges the outputs of the reVISION stack's classic computer vision and deep learning inference acceleration flows, in cases where a design employs both techniques (Figure 1). The reVISION stack's software resources are complemented by a suite of development boards from Xilinx (supporting both Zynq-7000 SoCs and Zynq UltraScale+ MPSoC devices), along with image sensors and cameras from partner companies.

Figure 1. The reVISION stack augments the SDSoC iterative design flow and optimizing compiler with FPGA-tailored utilities, functions and other software elements targeting both classic computer vision and deep learning inference acceleration (top). Hardware from both Xilinx and partners complements reVISION's software aspects (bottom).

Xilinx's reVISION webinar, "Caffe to Zynq: State-of-the-Art Machine Learning Inference Performance in Less Than 5 Watts," is now available for free on-demand viewing. A companion reVISION webinar, "OpenCV on Zynq: Accelerating 4k60 Dense Optical Flow and Stereo Vision," will take place on July 12, 2017 at 10 am PT; registration is required, and the webinar will also be available for free on-demand viewing after the live event.
