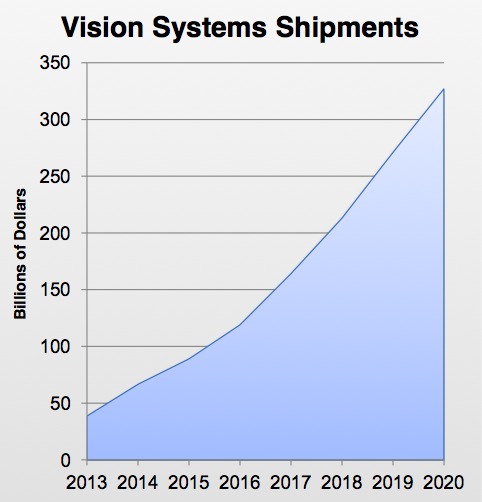

The computer vision market is in a period of dramatic expansion. Market forecasts compiled by Synopsys attest to the rapidly growing adoption of practical computer vision (i.e., "embedded vision") technology in a range of high-volume products (Figure 1). This growth is fueled by the increasing performance and decreasing cost and power consumption of processors, and by the growing awareness of the value that can be delivered via object detection, tracking, recognition and other vision processing functions.

Figure 1. The embedded vision systems market is forecast to exceed $300B by 2020, with a 35% compound annual growth rate.

A variety of processor options are capable of running vision algorithms. On one end of the flexibility-versus-efficiency spectrum are conventional CPUs. Intermediate candidates include GPUs, DSPs, and FPGAs. On the opposite end of the spectrum are specialized vision processors and cores, which are tailored to the application but largely unsuitable for non-vision tasks. Vision-specific licensable processor cores are currently offered by Apical, Cadence (formerly Tensilica), CEVA, CogniVue, and videantis, for example, while vision-specific processor chips are offered by Analog Devices, Movidius, and Texas Instruments.

At first glance, therefore, one might conclude that Synopsys' recently announced DesignWare EV (Embedded Vision) licensable silicon IP processor family is entering a crowded field. Senior Product Marketing Manager Mike Thompson feels, however, that the company brings a unique perspective to the vision-processing ecosystem. At the core of Synopsys' product is a focus on convolutional neural networks (CNNs), a technique that, as Jeff Bier discussed in a recent editorial, is an increasingly popular solution to a host of object recognition and other computer vision challenges.
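As a rough illustration of the operation that gives CNNs their name, the Python sketch below computes a single-channel 2D "valid" convolution followed by a ReLU activation. The function name and the toy image and kernel are hypothetical; real CNN layers operate on many channels and layers at once, and the sketch says nothing about how the EV cores implement the operation.

```python
from typing import List, Sequence

Matrix = List[List[float]]


def conv2d_relu(image: Sequence[Sequence[float]],
                kernel: Sequence[Sequence[float]]) -> Matrix:
    """Single-channel 'valid' 2D convolution followed by ReLU.

    Like most CNN frameworks, this computes cross-correlation (the kernel is
    not flipped); for a learned kernel the distinction does not matter.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out: Matrix = []
    for y in range(out_h):
        row: List[float] = []
        for x in range(out_w):
            # Multiply-accumulate the kernel over the window anchored at (y, x).
            acc = 0.0
            for ky in range(kh):
                for kx in range(kw):
                    acc += image[y + ky][x + kx] * kernel[ky][kx]
            # ReLU: keep positive responses, zero out negative ones.
            row.append(max(acc, 0.0))
        out.append(row)
    return out


if __name__ == "__main__":
    # Toy 4x4 image with a dark-to-bright vertical edge between columns 1 and 2.
    img = [[0, 0, 1, 1] for _ in range(4)]
    # Hand-picked 2x2 kernel that responds to left-to-right brightness increases.
    edge = [[-1, 1], [-1, 1]]
    for r in conv2d_relu(img, edge):
        print(r)
```

Each output value costs kh × kw multiply-accumulates; a CNN repeats this across many channels and layers, which is the kind of regular, MAC-heavy workload that vision-specific cores target.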

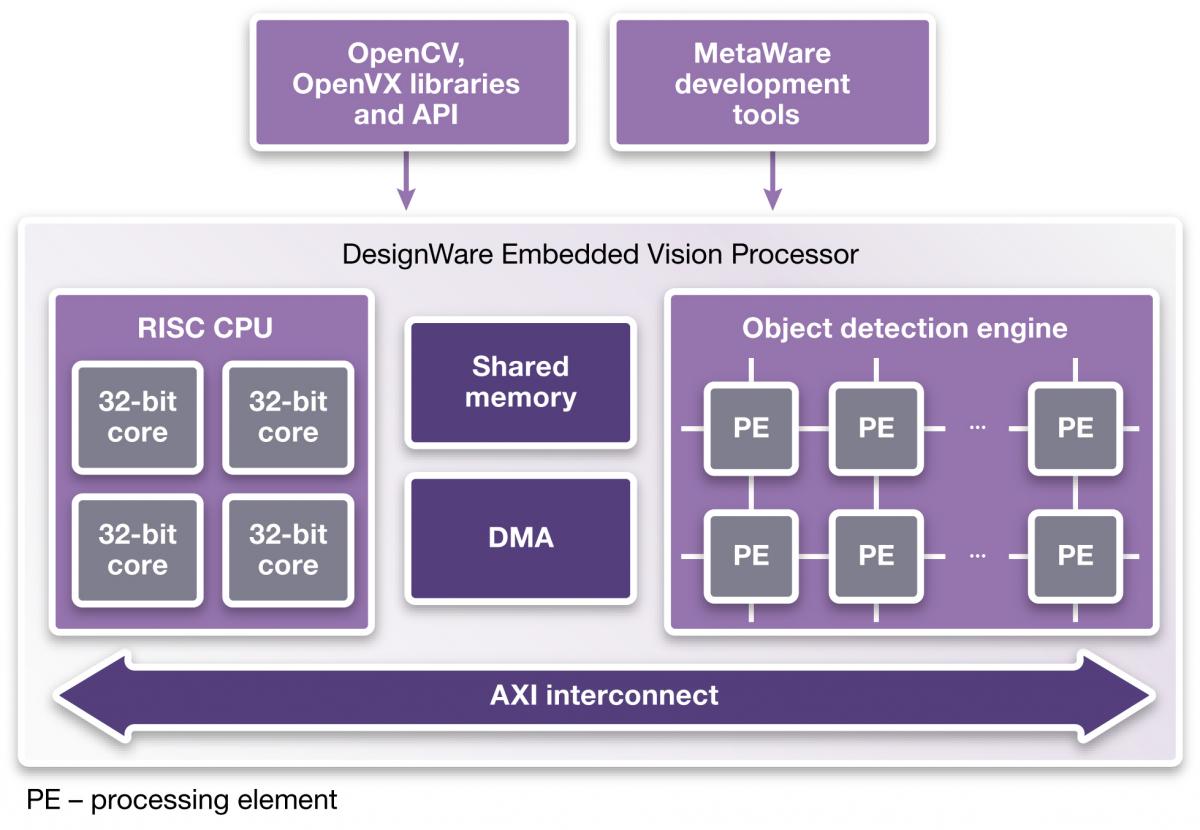

The DesignWare EV family currently consists of two products, the dual-core EV52 and the quad-core EV54; the latter occupies approximately 1 square millimeter of silicon area on a 28 nm process, not including associated embedded memory (Figure 2). Thompson explained that EV processors are not intended for host CPU functions; instead, they were designed as vision-focused "offload" coprocessors that work in conjunction with a separate general-purpose CPU core (ARC, ARM, MIPS, x86, etc.) integrated within the same SoC. According to Synopsys, EV cores can run at up to 1.6 GHz on a 28 nm process, although for now the company is limiting them to 1 GHz, with the Object Detection Engine running at 500 MHz, half the speed of the cores' RISC CPUs. A single-core EV variant is currently under consideration as an off-the-shelf product option; for now, a single-core configuration can be created during the "build" process.

Figure 2. Synopsys believes that its processors' convolutional neural network (CNN) capabilities will enable them to target a significant number of embedded vision applications.
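To put the EV clock rates in perspective, a simple per-frame cycle budget divides a clock rate by the target frame rate. The sketch below is illustrative only: the helper names are hypothetical, the 30 fps target is an assumption, and 1280x720 is the 720p resolution used in Synopsys' benchmarks. It ignores memory stalls and parallelism across cores and PEs, so it describes the cycles available in one clock domain, not achievable performance.

```python
def cycles_per_frame(clock_hz: float, fps: float) -> float:
    """Clock cycles available in one clock domain for each frame."""
    return clock_hz / fps


def cycles_per_pixel(clock_hz: float, fps: float, width: int, height: int) -> float:
    """Cycles available per pixel of each frame, for a single clock domain."""
    return cycles_per_frame(clock_hz, fps) / (width * height)


if __name__ == "__main__":
    FPS = 30            # illustrative target frame rate (assumption)
    W, H = 1280, 720    # 720p frame
    domains = [
        ("RISC core @ 1 GHz", 1e9),
        ("Object Detection Engine @ 500 MHz", 500e6),
    ]
    for name, hz in domains:
        print(f"{name}: {cycles_per_frame(hz, FPS):,.0f} cycles/frame, "
              f"{cycles_per_pixel(hz, FPS, W, H):.1f} cycles/pixel")
```

At 30 fps, a 1 GHz RISC core has roughly 33 million cycles per frame (about 36 per 720p pixel), and the 500 MHz Object Detection Engine half that, which is why spreading the work across multiple cores and PEs matters.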

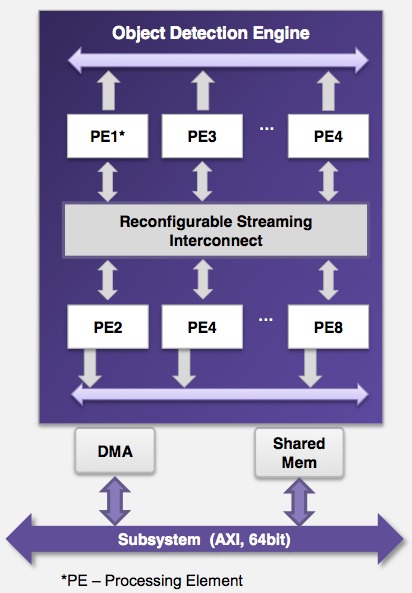

At the heart of the EV processors' convolutional neural network capabilities is the object detection engine, which consists of two, four, or eight processing elements (PEs) (Figure 3). The number of PEs is configurable at "build" time and is application-dependent, varying with the required CNN complexity and throughput. For now, Synopsys handles both the creation of a CNN graph (via training with reference images) and its porting to a customer's object detection engine architecture. A customer-usable CNN graph-porting tool is scheduled to become available later this year.

Figure 3. The object detection engine, which executes the trained-and-ported CNN graph, can be customized via an application-dependent number of PE cores and is dynamically reconfigurable for multiple image recognition tasks.
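The article does not describe how a CNN graph is mapped onto the PEs. Purely as a hypothetical illustration of why throughput depends on PE count, the sketch below splits one layer's output feature maps round-robin across N PEs and estimates the layer's time as that of the busiest PE. The function names, the equal-cost-per-map model, and the round-robin policy are all assumptions, not Synopsys' mapping tool.

```python
from typing import Dict, List


def assign_round_robin(num_maps: int, num_pes: int) -> Dict[int, List[int]]:
    """Hypothetical mapping: output feature map i goes to PE (i mod num_pes)."""
    if num_pes < 1:
        raise ValueError("need at least one PE")
    assignment: Dict[int, List[int]] = {pe: [] for pe in range(num_pes)}
    for m in range(num_maps):
        assignment[m % num_pes].append(m)
    return assignment


def layer_time(num_maps: int, num_pes: int, cost_per_map: float = 1.0) -> float:
    """Toy latency model: every map costs the same and PEs run in parallel,
    so the most heavily loaded PE determines when the layer finishes."""
    assignment = assign_round_robin(num_maps, num_pes)
    return max(len(maps) for maps in assignment.values()) * cost_per_map


if __name__ == "__main__":
    # A hypothetical layer with 24 output feature maps on 2, 4, or 8 PEs.
    for pes in (2, 4, 8):
        print(pes, "PEs ->", layer_time(24, pes), "time units")
```

Under this toy model, doubling the PE count halves layer time only when the maps divide evenly; 10 maps on 4 PEs still take 3 time units, because two of the PEs receive three maps each.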

According to Thompson, a fully configured EV core variant delivers 1000 GOPS/W. More specifically, Synopsys provides benchmark results for several applications, based on processing 720p high-definition images on the 1 GHz EV52 processor fabricated on a 28 nm HPM (high performance mobile) process (Table 1). Note that the estimated silicon area in these cases includes both the processor and the required embedded memory. The "# Scales" column gives the number of image pyramid levels processed, providing a measure of scene complexity.
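In pyramid processing, a detector is run on successively downscaled copies of each frame so that a fixed-size detection window can find objects of different sizes, and each extra scale adds work. The sketch below lists the level sizes and total pixel count for a 720p frame. The downscale factor of 2 per level and the function names are assumptions for illustration; Synopsys does not state the factor used in its benchmarks, and practical detectors often use finer steps.

```python
from typing import List, Tuple


def pyramid_sizes(width: int, height: int, num_scales: int,
                  factor: float = 2.0) -> List[Tuple[int, int]]:
    """Frame size at each pyramid level. Level 0 is full resolution; each
    later level is downscaled by `factor` in both dimensions (assumed)."""
    sizes: List[Tuple[int, int]] = []
    w, h = float(width), float(height)
    for _ in range(num_scales):
        sizes.append((int(w), int(h)))
        w /= factor
        h /= factor
    return sizes


def total_pixels(sizes: List[Tuple[int, int]]) -> int:
    """Pixels processed across all pyramid levels, a rough workload proxy."""
    return sum(w * h for w, h in sizes)


if __name__ == "__main__":
    levels = pyramid_sizes(1280, 720, num_scales=4)  # factor of 2 is an assumption
    print(levels)
    print(total_pixels(levels) / (1280 * 720))
```

With a factor of 2, four scales cost only about 1.33 times the pixels of a single full-resolution pass; a finer factor would raise that overhead.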

| Application | # PEs | Speed (fps) | # Scales | Power consumption (mW) | Area (mm²) |
|---|---|---|---|---|---|
| Face detection | 6 | 30 | 4 | 108 | 2.7 |
| Speed limit sign detection | 8 | 21 | 1 | 132 | 3.1 |

Table 1. 1 GHz, 28 nm-fabricated EV52 benchmark results from Synopsys
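The Table 1 figures can be turned into energy per frame by dividing power by frame rate (mW divided by frames per second gives mJ per frame). The sketch below performs only that arithmetic on the published numbers; the `Benchmark` class is a hypothetical container, and the per-pixel figure assumes full 1280x720 input frames.

```python
from dataclasses import dataclass


@dataclass
class Benchmark:
    name: str
    pes: int
    fps: float
    scales: int
    power_mw: float
    area_mm2: float

    def energy_per_frame_mj(self) -> float:
        # mW divided by frames/s gives mJ per frame.
        return self.power_mw / self.fps

    def energy_per_pixel_nj(self, width: int = 1280, height: int = 720) -> float:
        # Convert mJ to nJ (x 1e6) and spread over the input frame's pixels.
        return self.energy_per_frame_mj() * 1e6 / (width * height)


# Values transcribed from Table 1 (EV52, 1 GHz, 28 nm HPM, 720p input).
TABLE_1 = [
    Benchmark("Face detection", 6, 30, 4, 108, 2.7),
    Benchmark("Speed limit sign detection", 8, 21, 1, 132, 3.1),
]

if __name__ == "__main__":
    for b in TABLE_1:
        print(f"{b.name}: {b.energy_per_frame_mj():.2f} mJ/frame, "
              f"{b.energy_per_pixel_nj():.2f} nJ/pixel")
```

By this measure, face detection costs about 3.6 mJ per frame versus roughly 6.3 mJ for speed limit sign detection, although the two workloads differ in PE count and number of scales, so this is not a like-for-like comparison.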

The DesignWare EV family is supported by the OpenCV function library, and, according to Thompson, all 40 OpenVX vision processing API kernels have been ported to the cores; optimization of those ports is under way, with completion targeted for the end of summer. The company is also developing higher-level software reference designs for face detection, speed limit sign detection, and face tracking, targeting applications such as advanced driver assistance systems (ADAS) and security systems. In addition, a C/C++ MetaWare development toolkit is available for programming kernels on EV processor cores.
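Unlike OpenCV's function-call style, OpenVX has applications describe processing as a graph of kernels that the implementation can check and optimize before running it (in the C API, roughly `vxCreateGraph`, node-creation functions such as `vxGaussian3x3Node`, then `vxVerifyGraph` and `vxProcessGraph`). The Python sketch below is not OpenVX and uses none of its API; `ToyGraph` and its kernels are hypothetical, meant only to mimic that build, verify, process pattern and show why a whole-graph view gives an implementation room to schedule work.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, List


class ToyGraph:
    """Hypothetical build/verify/process pipeline, loosely modeled on the
    OpenVX graph concept. This is NOT the OpenVX API."""

    def __init__(self) -> None:
        self.kernels: Dict[str, Callable[..., Any]] = {}
        self.inputs: Dict[str, List[str]] = {}
        self.order: List[str] = []
        self.verified = False

    def add_node(self, name: str, kernel: Callable[..., Any], inputs: List[str]) -> None:
        # Adding a node invalidates any earlier verification.
        self.kernels[name] = kernel
        self.inputs[name] = list(inputs)
        self.verified = False

    def verify(self, sources: List[str]) -> None:
        # Every node input must be a graph source or another node's output.
        known = set(sources) | set(self.kernels)
        for name, deps in self.inputs.items():
            missing = [d for d in deps if d not in known]
            if missing:
                raise ValueError(f"node {name!r} has unknown inputs {missing}")
        # Fix the execution order once, up front (raises graphlib.CycleError on cycles).
        node_deps = {n: [d for d in deps if d in self.kernels]
                     for n, deps in self.inputs.items()}
        self.order = list(TopologicalSorter(node_deps).static_order())
        self.verified = True

    def process(self, sources: Dict[str, Any]) -> Dict[str, Any]:
        if not self.verified:
            raise RuntimeError("call verify() before process()")
        values = dict(sources)
        for name in self.order:
            values[name] = self.kernels[name](*(values[d] for d in self.inputs[name]))
        return values


if __name__ == "__main__":
    g = ToyGraph()
    # Made-up kernels operating on a 1-D list of pixel values.
    g.add_node("scaled", lambda px: [p // 2 for p in px], ["input"])
    g.add_node("mask", lambda px: [1 if p > 50 else 0 for p in px], ["scaled"])
    g.verify(sources=["input"])
    print(g.process({"input": [40, 120, 200, 90]})["mask"])
```

Because the whole pipeline is known before `process()` runs, a real implementation could fuse nodes or assign them to different processing units ahead of time, something a sequence of independent function calls does not expose.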

Synopsys' introduction of the DesignWare EV family of cores demonstrates that the company considers embedded vision to be an important element of new chip designs. In this, Synopsys has plenty of company, with numerous competitors also offering licensable processor cores for vision applications. Where Synopsys diverges from its competitors is in making convolutional neural networks its primary focus. Recently published results show that CNNs often outperform conventional "engineered" vision algorithms on object recognition tasks. However, these published results are typically based on CNN implementations running on large GPU-accelerated server clusters.

4/25/15: This article has been updated to clarify Movidius' role as a vision processor chip supplier, and to add mention of Analog Devices and Texas Instruments as vision processor chip suppliers.

4/29/15: This article has been updated to clarify the development status of Synopsys' OpenVX support.

5/5/15: This article has been updated to clarify that the DesignWare EV cores and their RISC processors run at 1 GHz, with the Object Detection Engine running at 500 MHz, as well as to further clarify the status of both OpenCV and OpenVX support. The benchmark results shown in Table 1 have also been updated.
